2026.02.24

The Personal AI OS I Didn’t Have to Build

こんにちは、次世代システム研究室のN.M.です。

How installing PAI turned Claude Code from a tool I used to a system that knows me.

The Version of Claude Code You Didn’t Know Existed

Claude Code is already excellent. You probably know this. What you might not know is that there’s a layer you can add on top — one command — that gives it a memory, a personality, a problem-solving framework, and a goal-tracking system. You don’t build any of it. You install it, use Claude Code as normal, and the system compounds.

That layer is PAI — Personal AI Infrastructure, an open-source project by Daniel Miessler. It’s been quietly accumulating 9,000+ GitHub stars and a Cognitive Revolution podcast appearance. If you’re a daily Claude Code user and you haven’t heard of it, this post is for you.

Claude Code Is Powerful. It Just Doesn’t Know Who You Are.

Here’s the thing nobody talks about: Claude Code resets every session.

Every time you open it, you’re starting from scratch. It doesn’t know your name, your current projects, what you were working on yesterday, how you like to communicate, or what goals you’re working toward. You’re using a world-class AI that treats you like a complete stranger every single morning.

The model is capable. The scaffolding around it is generic.

It’s like having a brilliant contractor who does exceptional work — but forgets your name every Monday. Forgets what you discussed last week. Has to re-read everything before they can contribute. You end up spending real time rebuilding context that you already built before.

PAI is the system that fixes this. Not by changing the model. By changing what surrounds it.

The Repo Looks Complex. The Install Isn’t.

When you open the PAI repository for the first time, you see: 38 skills, 163 workflows, 20 hooks, 819+ signals. There’s a 7-phase algorithm. A goal documentation system. A memory architecture. A voice notification system.

It looks like it requires configuration work. It doesn’t.

The complexity is already built. You inherit it.

The Install Takes Minutes

A few commands:

# Clone the repo git clone https://github.com/danielmiessler/Personal_AI_Infrastructure.git cd Personal_AI_Infrastructure/Releases/v3.0 # Copy the release and run the installer cp -r .claude ~/ && cd ~/.claude && bash PAI-Install/install.sh

The installer handles the rest — identity configuration, memory setup, skill library, hooks, CLAUDE.md injection. You answer a few setup questions. The whole thing takes minutes.

The first Claude Code session after install looks different immediately. Instead of just a terminal prompt, the session announces itself: version, skill count, workflow count, active context loading. The system is already working.

I didn’t write a single line of infrastructure.

What Actually Changed After a Week of Daily Use

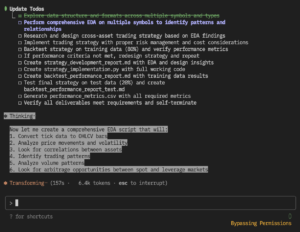

The clearest example is a nine-word prompt I typed mid-session: “What should the next section of the blog cover?”

> What should the next section of the blog cover?

♻︎ Entering the PAI ALGORITHM…

━━━ 👁️ OBSERVE ━━━ 1/7

🔎 REVERSE ENGINEERING:

- Explicitly wants: which blog section to work on next

- Implied: actionable guidance on unblocking the blog given

where things currently stand

- Explicitly doesn't want: a rehash of the full outline,

a "here are all the sections" response

- Implied don't want: a recommendation that ignores the

VM sprint dependency

- Gotcha: the outline is complete — the question isn't

"what goes in the outline" but "what do I actually

write next given current state"

Notice what’s happening here. PAI isn’t just parsing the words — it’s drawing on everything it knows: the outline is done, there’s a placeholder section blocked on the VM sprint, I’m mid-demo. That context makes the inferences accurate. “Don’t give me a rehash of the outline” wasn’t in my prompt. PAI inferred it because it knew the outline already existed.

Then the THINK phase stress-tests its own assumptions before answering:

━━━ 🧠 THINK ━━━ 2/7

🔬 PRESSURE TEST:

- Riskiest assumption: That "next section" means "next to

write." Given the VM sprint is the immediate unblocking

action, the real answer might be "don't write — run the

sprint first."

- Pre-mortem: If I just say "write section X," the draft

gets written without the real examples that make it land.

The placeholder dependency is real.

🔍 VERIFICATION PLAN:

- Does the answer mention the VM sprint dependency? YES/NO

- Is a reason given, not just a section name? YES/NO

- Are "writable now" vs "blocked" sections distinguished? YES/NO

- Does following this advice move the blog toward publishable? YES/NO

It identified the actual risk — that recommending a section to write while ignoring the blocked placeholder would steer me wrong — before giving any answer. Then it set explicit criteria for what a good answer would look like.

Only then does it give the recommendation:

Write "Under the Hood" next.

1. You just ran the Algorithm three times in a row — write

it while the intuition is fresh.

2. It doesn't need the VM sprint. "What Actually Changed"

is blocked. "Under the Hood" is self-contained.

3. It's the hardest section to write well. Today's fluency

is your best shot at keeping it light rather than dense.

That’s what nine words produced: an accurate model of my intent, a risk check, success criteria, and a prioritized recommendation with three reasons. Vanilla answered the surface question. PAI answered the question I was actually trying to ask.

What’s Actually Happening Under the Hood

If you’re curious what’s doing the work, here’s the short version.

The Algorithm. Every PAI response follows a 7-phase loop: Observe, Think, Plan, Build, Execute, Verify, Learn. The Observe phase reverse-engineers what you actually want — explicit, implied, and what you explicitly don’t want. Verify runs against criteria that were set in Observe. This is why PAI outputs feel structured rather than conversational — it’s not a style choice, it’s the loop running every time.

The Memory system. PAI captures signals from every session — decisions made, work completed, patterns in how you work. It builds a persistent picture across conversations. Session start hooks inject your identity, active projects, and recent context before you type a word. The identity response above wasn’t PAI reading files. It was loaded from memory before the prompt arrived.

Skills. 38 pre-built capabilities — ExtractWisdom, Council, Red Team, Research, Browser, and more. Think of them as purpose-built workflows Claude Code can invoke. Some are named after patterns from Daniel’s earlier project, Fabric — but where Fabric patterns are static prompts you pipe content through, PAI skills are full autonomous workflows: multi-step, tool-using, agent-spawning. You don’t configure or trigger them manually; the Algorithm’s capability audit selects the right one per task.

TELOS. A goal documentation framework — actually a separate open-source project that PAI integrates. Ten markdown files: MISSION.md, GOALS.md, PROJECTS.md, CHALLENGES.md, STRATEGIES.md, and five more. The structure is hierarchical: Problems → Mission → Goals → Challenges → Strategies → Projects. Every project you work on connects upward to a challenge, which connects to a goal, which connects to a mission. When PAI sees you mention a project, it knows why you’re doing it — not just what it is. The practical effect: every session starts with a definition of success already loaded. Responses get evaluated against your actual goals, not just the surface request. Daniel’s own TELOS file runs 2,100+ lines.

You don’t need to understand any of this to get value from it. The install handles the setup. Daily use builds the rest.

This Is What 9,000 Developers Already Have.

PAI is open source, MIT licensed, and free to install.

The project has 9,024 GitHub stars and 1,234 forks. v3.0.0 shipped February 15, 2026 with parallel loop execution, persistent PRDs, and inline verification. Active development, real community.

For context on the author: Daniel Miessler created Fabric (39k+ GitHub stars), publishes the Unsupervised Learning newsletter (500+ weekly issues), and joined Nathan Labenz on the Cognitive Revolution podcast in January 2026 for a 97-minute deep dive on PAI’s architecture.

If you want to go deeper before installing: the Cognitive Revolution episode covers TELOS, memory design, and the philosophical underpinning. The PAI GitHub README covers the architecture. The install takes an afternoon.

The Tool Didn’t Change. What Changed Was It Got To Know Me.

Claude Code was already excellent. The model was never the problem. The gap was that every session started from zero — no memory of what I was working on, no context about how I think, no framework for turning a vague prompt into a structured outcome.

PAI closed that gap. Not by replacing anything. By adding a layer that accumulates.

I didn’t build an AI system. I installed one. And it’s been compounding ever since.

→ github.com/danielmiessler/Personal_AI_Infrastructure

次世代システム研究室では、グループ全体のインテグレーションを支援してくれるアーキテクトを募集しています。インフラ設計、構築経験者の方、次世代システム研究室にご興味を持って頂ける方がいらっしゃいましたら、ぜひ募集職種一覧からご応募をお願いします。

グループ研究開発本部の最新情報をTwitterで配信中です。ぜひフォローください。

Follow @GMO_RD